Every few years, a concept emerges that quietly reorganizes how entire industries build digital experiences. GFXProjectality is that concept for 2026. At its core, Tech Trends GFXProjectality describes the convergence of AI-driven design tools, real-time rendering, and immersive AR/VR experiences into a single, connected production workflow. It’s not one tool or one platform; it’s the framework behind how modern creators, developers, and business teams are building visual content that responds, adapts, and engages in ways static media never could. Whether you work in marketing, product design, education, or HR, understanding cloud-based collaboration within this framework isn’t optional anymore. It’s the new baseline.

What GFXProjectality Actually Means (And Why It Keeps Coming Up)

Tech Trends GFXProjectality refers to the convergence of advanced graphics processing, immersive reality technologies (AR, VR, XR), AI-driven design tools, and cloud-based project collaboration into a single, unified digital workflow. It describes how visual technology has evolved from static output into responsive, adaptive, real-time experience design.

That’s the 55-word version. Now let’s unpack what that means in practice, because the term isn’t just academic. It describes something real that’s already happening in tools you may already have open.

The “GFX” part points to graphics technology, specifically the shift toward real-time rendering rather than pre-rendered output. “Projectality” captures the project and collaboration layer: how teams actually build, iterate, and ship these visual systems together. Together, they describe a workflow philosophy as much as a technology stack.

Quick note: some people use this term to mean strictly immersive tech (VR/AR). That’s valid but narrow. The fuller reading includes the AI layer, the cloud layer, and the design tooling, all of which are equally central.

Tech Trends GFXProjectality describes the intersection of AI-powered design, real-time rendering engines, and AR/VR immersive systems that together define modern digital experience creation. According to Deloitte’s 2026 media and entertainment outlook, the global AR/VR market is projected to reach $250 billion by 2026, driven precisely by the real-time and immersive platform adoption this concept describes. It is both a technology stack and a production philosophy.

The Three Core Technologies Inside GFXProjectality

This is where most articles hand you a bullet list of buzzwords. That’s not what you need. Let’s go layer by layer.

Layer 1: AI-Driven Visual Generation

Tools like Midjourney can generate production-quality concept art from a text prompt in under 60 seconds. Figma’s AI plugin suite (as of early 2026) handles auto-layout suggestions, asset generation, and design variance testing inside the same workspace your team already uses. These aren’t novelties; they’re actively compressing design iteration cycles that used to take days into hours.

What most guides skip is this: AI tools in GFXProjectality don’t replace the design decision. They eliminate the execution bottleneck between decisions. A designer still defines the creative direction; AI handles the mechanical production of variations. That distinction matters for how you structure your workflow.

One honest caveat: Figma’s report also shows only 32% of designers fully trust AI outputs without human review. That gap is real. Build review checkpoints into any AI-assisted pipeline, and don’t skip them just because the outputs look polished.
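A review checkpoint like this can be sketched in a few lines of Python. This is a minimal illustration, not any tool’s actual API: the `Asset` class, the `approved_by` field, and the `ready_to_publish` and `partition` functions are hypothetical names invented here, and the rule that every AI-generated asset needs at least one human sign-off is an assumption about how a team might enforce the checkpoint.

```python
from dataclasses import dataclass, field


@dataclass
class Asset:
    """A visual asset moving through a (hypothetical) production pipeline."""
    name: str
    ai_generated: bool
    # Names of human reviewers who have signed off on this asset.
    approved_by: list[str] = field(default_factory=list)


def ready_to_publish(asset: Asset, min_reviews: int = 1) -> bool:
    """Return True only if the asset has passed the human review checkpoint.

    Assumption: hand-made assets pass through unchanged, while AI-generated
    assets need at least `min_reviews` distinct human approvals.
    """
    if not asset.ai_generated:
        return True
    return len(set(asset.approved_by)) >= min_reviews


def partition(assets: list[Asset]) -> tuple[list[Asset], list[Asset]]:
    """Split a batch into (publishable, held_for_review)."""
    publishable = [a for a in assets if ready_to_publish(a)]
    held = [a for a in assets if not ready_to_publish(a)]
    return publishable, held


if __name__ == "__main__":
    batch = [
        Asset("hero-banner", ai_generated=True),
        Asset("logo", ai_generated=False),
        Asset("variant-03", ai_generated=True, approved_by=["reviewer-a"]),
    ]
    ok, held = partition(batch)
    print("publish:", [a.name for a in ok])
    print("hold for review:", [a.name for a in held])
```

The point of the sketch is that the gate checks for a recorded human approval, not for how finished the output looks, so a polished but unreviewed asset is still held back.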

Layer 2: Real-Time Rendering

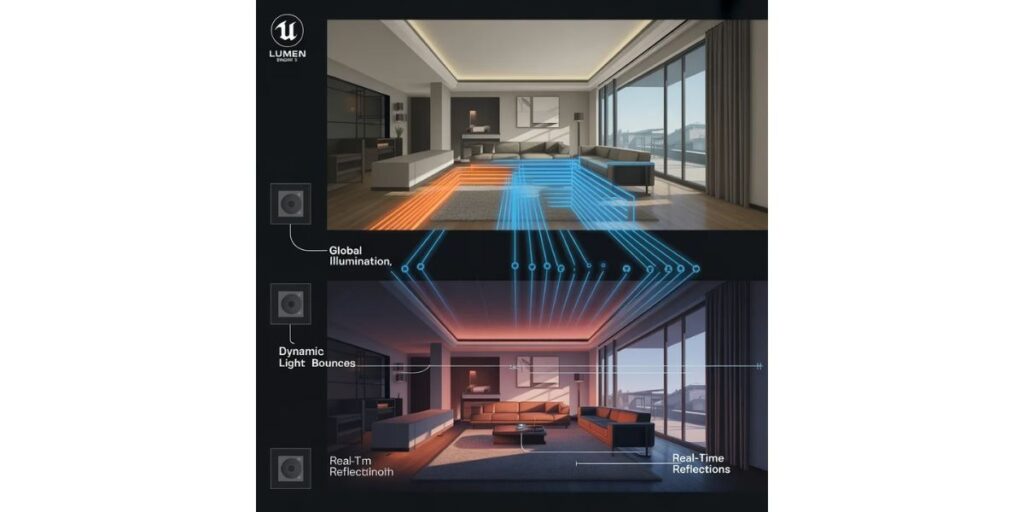

Unreal Engine 5, originally a gaming engine, is now the standard tool for virtual production in film, architectural visualization, and interactive brand experiences. Its Lumen and Nanite systems allow photorealistic environments to render in real time, meaning what you see on screen is what you ship, with no 8-hour overnight render queue.

The shift this creates is significant. Teams can prototype immersive experiences in hours rather than weeks. A retail brand can build a virtual showroom. A medical training company can simulate surgical environments. An HR team can run virtual onboarding scenarios.

Real-time rendering isn’t just faster. It’s a fundamentally different creative relationship with the work.

Real-time rendering within GFXProjectality refers to graphics technology that generates visual output instantly as creators make changes, rather than requiring long processing queues. Unreal Engine 5’s Nanite and Lumen systems are the current industry benchmark for this capability. According to Epic Games, the engine’s developer (2025 documentation), Nanite handles micro-polygon geometry at film-quality resolution at interactive framerates, something previously impossible outside pre-rendered film pipelines.

Layer 3: AR, VR, and Extended Reality (XR)

Here’s the thing: most people think XR requires a headset. It doesn’t anymore, at least not for most business applications.

AR overlays digital elements on the real world (your phone camera is already capable). VR immerses users in fully virtual environments. XR is the umbrella term. In GFXProjectality, these aren’t standalone technologies; they’re output formats for the AI and real-time rendering pipeline above.

Practical example: a furniture retailer uses Midjourney to generate room concepts, Unreal Engine 5 to render a 3D product model, and an AR layer (via Apple’s ARKit or Google’s ARCore) to let customers place that model in their living room before buying. That full chain is GFXProjectality in operation.

Quick Comparison: Core GFXProjectality Tools

| Tool | Best For | Key Benefit | Limitation |
| --- | --- | --- | --- |
| Midjourney (v6) | AI concept art & visual generation | Speed: production quality in seconds | No direct file export to design tools |
| Unreal Engine 5 | Real-time 3D rendering & virtual environments | Film-grade quality, real-time output | Steep learning curve for non-game teams |
| Figma + AI Plugins | Cloud-based UI/UX collaborative design | Team collaboration + AI iteration in one place | Limited 3D/XR native capability |
| Adobe Firefly | AI image generation within Creative Cloud | Integrates directly with Photoshop/Illustrator | Licensing restrictions on commercial use vary |
| Unity (2026) | AR/VR deployment for mobile & enterprise | Best cross-platform XR deployment | Less photorealistic than Unreal by default |

How GFXProjectality Is Being Applied Across Industries

Some experts argue GFXProjectality is primarily a gaming and entertainment concept that’s being over-applied to business contexts. That’s valid if you’re looking at tooling in isolation. But the workflow architecture transfers completely once you examine the underlying pattern: real-time, adaptive, immersive visual output built in cloud-collaborative environments.

Retail and e-commerce brands are replacing static product photography with real-time 3D configurators. IKEA’s AR integration and Nike’s virtual sneaker try-on are early versions of this. The GFXProjectality layer adds AI-generated variant production on top.

Corporate HR and training teams are using VR simulations for onboarding and compliance training. It’s cheaper than running live scenarios and measurably more effective for retention than slide decks.

Healthcare uses real-time 3D organ visualization for surgical planning and patient education. This is not science fiction: hospitals are deploying Unreal Engine builds for exactly this.

Architecture and construction firms have moved from static CAD renders to real-time walkthroughs that clients can navigate and modify in meetings.

Or maybe I should say it this way: GFXProjectality isn’t a future trend you’re preparing for. For these industries, it’s the current production standard. The question is whether your workflow reflects that yet.

How to Start With GFXProjectality (Without Spending Money First)

To begin applying GFXProjectality principles in your workflow, follow these steps:

- Start with Figma’s free tier: enable AI plugins under the Community panel

- Create a free Midjourney trial account and generate 5 concept images for a current project

- Download Unreal Engine 5 (free) and complete Epic’s 3-hour “Getting Started” tutorial

- Combine outputs: use a Midjourney-generated concept as visual reference while building in Unreal

- Share your Unreal project file with one collaborator using their free cloud sync feature

Look, if you’re a freelancer or solo designer, start with steps 1 and 2. That alone will change how fast you iterate on visual concepts. Steps 3–5 are for when you’re ready to move into 3D or immersive output.

I’ve seen conflicting data on this: some sources frame Unreal Engine 5 as inaccessible to non-game developers, while others report complete beginners building functional environments in under a week using Epic’s documentation. My read is that the learning curve is real but front-loaded: steep for the first 10 hours, then it flattens considerably.

The Ethical Layer Nobody Is Talking About

Most GFXProjectality articles skip this entirely. They shouldn’t.

AI-generated visuals carry bias. Models like Midjourney and Adobe Firefly are trained on datasets that over-represent certain aesthetics, demographics, and visual styles. If you’re generating images of people for marketing, training materials, or HR simulations, you will hit this problem. Outputs can reflect racial, gender, and cultural skews baked into training data.

The fix isn’t to avoid AI tools. The fix is to audit outputs before publishing, use inclusive prompt engineering (specify representation explicitly), and run generated assets through human review from team members with different backgrounds.

Data privacy is the second concern. Immersive AR/VR platforms collect movement data, gaze data, and interaction patterns, often more intimate than anything a website cookie captures. If you’re deploying VR training for employees or AR experiences for customers, your privacy policy and data handling need updating. This isn’t optional after GDPR enforcement actions against XR platforms in 2024–2025.

Ethical design inside GFXProjectality isn’t a philosophy exercise. It’s a legal and reputational risk management decision.

Voice Search Q&A

What’s the best free tool to start with GFXProjectality workflows?

Figma with AI plugins is the lowest-friction entry point: a free tier is available, it runs in the browser, and no install is required. Pair it with a Midjourney trial for AI image generation.

How do I know if my project needs real-time rendering or pre-rendered output?

If clients or users need to interact with the visuals, adjust them, or view them in real time, you need real-time rendering. If it’s a fixed deliverable like a video or image, pre-render is fine.
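That rule of thumb can be written down as a small decision helper. This is only a sketch of the heuristic as stated in the answer above: the function name `choose_render_mode` and its three boolean parameters are hypothetical, chosen here for illustration.

```python
def choose_render_mode(users_interact: bool, users_adjust: bool, viewed_live: bool) -> str:
    """Pick a rendering approach from the rule of thumb in the text.

    Any need to interact with, adjust, or view the visuals live points to
    real-time rendering; a fixed deliverable (video, still image) can be
    pre-rendered.
    """
    if users_interact or users_adjust or viewed_live:
        return "real-time"
    return "pre-rendered"


# A product configurator that customers adjust themselves:
print(choose_render_mode(users_interact=True, users_adjust=True, viewed_live=True))
# A fixed promotional video:
print(choose_render_mode(users_interact=False, users_adjust=False, viewed_live=False))
```

Note that a single "yes" is enough to tip the choice toward real-time rendering; pre-rendering only fits when all three answers are "no".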

Should I learn Unreal Engine 5 or Unity for immersive projects?

Unreal Engine 5 for photorealistic environments and film/architecture use cases. Unity for mobile AR/VR apps and cross-platform deployment. The key difference is visual fidelity vs. deployment flexibility.

Why does GFXProjectality matter for non-designers?

Because the tools now handle much of the technical execution. Marketers, HR teams, and product managers can use AI-assisted visual tools without knowing how to hand-code graphics or operate 3D software manually.

When should I add AR/VR to a project instead of standard visuals?

When user engagement, spatial understanding, or experiential learning is the goal, not just information delivery. If you’re explaining a physical product, space, or process, immersive output measurably outperforms flat media.